Scaling Kapwing to 100k+ Projects a Day

After more than a decade of engineering experience, I joined Kapwing in 2018 as the first employee. Now, I lead the engineering organization and have touched nearly every part of the stack. In the time I've been here Kapwing has grown from a few scrappy engineers pushing production code to a single server into a mature organization supporting over 100k projects a day.

I like to describe Kapwing as a browser-based, cloud-based video editor. The browser based part is obvious if you have used the product, but the cloud part is hidden by design. If you aren't familiar with the limitations of your laptop's hardware or the challenges of video processing, it might not be clear what is happening on your device versus what is happening on our servers. In this article I will detail how we use a mix of client side and server side processing to produce videos quickly, reliably, and cost effectively.

Video Processing: the Basics

In the analog film world, a video is simply a roll of film with one image per frame. A 10-second video with 30 frames per second requires a roll of 300 images. This is fine if you are simply feeding a film strip into a projector, but is woefully inefficient for digital storage and transmission. A 10 second video at 30 frames per second must be significantly smaller than 300 images of equal resolution in order to be streamed and stored in a cost effective and performant manner.

Video encoders compress the images into key frames (aka I-Frames), which contain the entire image (think a jpeg or a png), and intermediate frames (aka P-Frames or B-Frames), which contain the diff between an adjacent frame and the current frame. For a video with minimal movement from frame to frame the diff can be extremely small. This process tends to be compute intensive but also highly parallelizable. An increase in compute cores will lead to a corresponding decrease in encoding speed.

Client vs Server

Unfortunately, while modern devices (laptops, tablets, phones) have improved in many ways, the number and power of their cores has not, at least not to the extent that server hardware has. In fact, many of these devices have been optimized for low power consumption at the expense of compute power. The tasks that do require parallelism, such as graphics rendering and video decoding, are offloaded to special purpose hardware accelerators (most often GPUs), which are of minimal utility to most video encoders.

On the other hand, servers have continued to squeeze more and more cores onto a single machine, and the cores have increased their performance at a modest pace. For video encoding, the performance gap between a commodity server and a top of the line laptop has grown and will continue to grow in the near future.

From a performance point of view, server-side processing is a no brainer. Server side processing also reduces our validation space, as we only have to design for a limited amount of HW/SW environments, where as the amount of client devices, OS versions, browsers, etc. is basically infinite.

The server-side approach, however, does have its drawbacks. Video processing requires a network connection, and for very small jobs the latency in contacting the server and transferring files could overwhelm the gains in processing performance. The largest negative by far is price. Running jobs on a customer's computer is free (for the app), while encoding videos on a cloud service provider can be extremely costly, especially if done inefficiently.

Today, Kapwing processes the vast majority of our videos on our servers. Client-side processing is reserved for images and for very small jobs. A significant amount of engineering effort goes towards doing this in a cost effective, resource efficient manner. This is a key component of our technical moat. In the following sections, I will describe the specific tasks our backend performs, as well as the techniques we use to optimize these flows.

Ingestion vs Processing

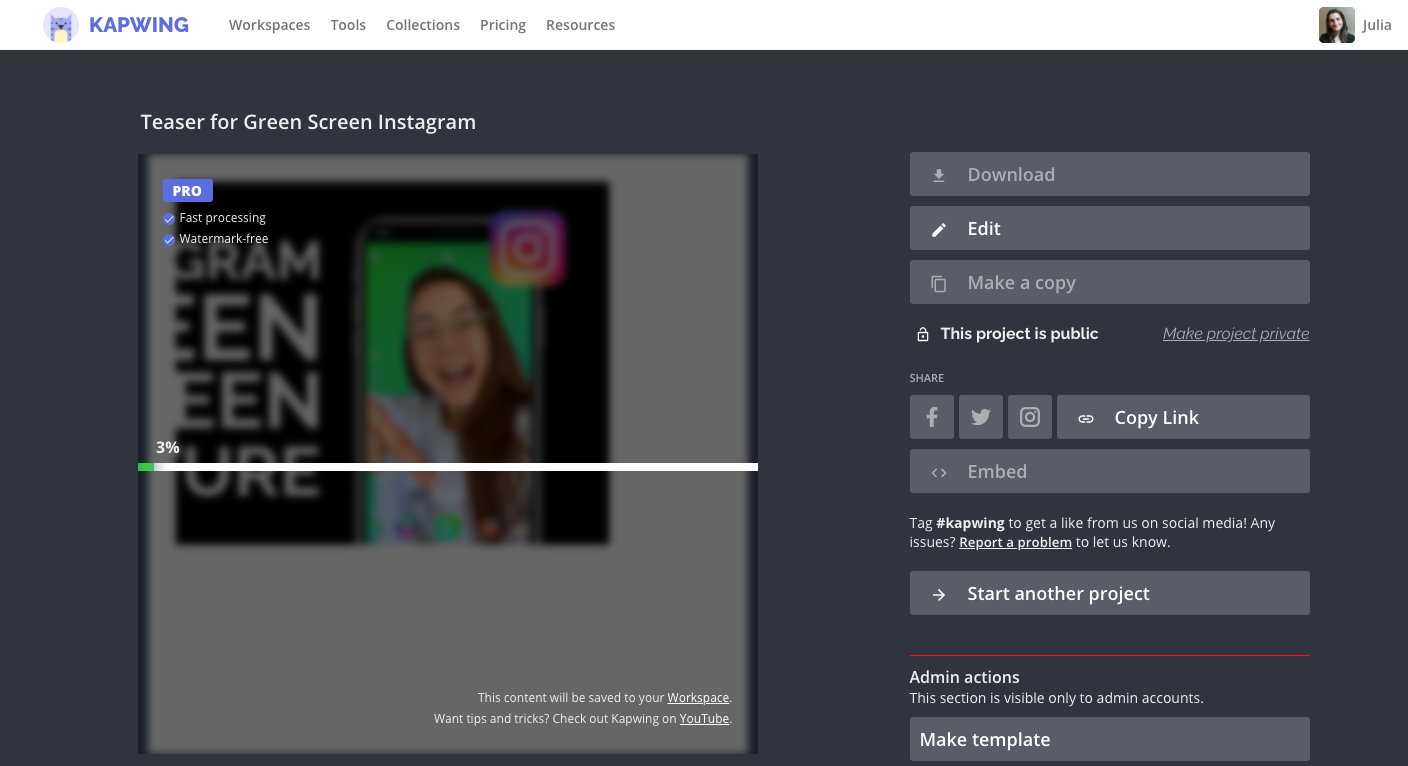

Kapwing's backend performs two basic functions: ingestion and processing. Judged by lines of code, ingestion is the far simpler task, but because it is responsible for bringing files from the wild into our system, it accounts for a disproportionate amount of development effort. Once files have successfully been brought into our system, > 99.9% of projects export successfully, however large amounts of effort go into increasing efficiency, increasing performance, and supporting new features.

Kapwing takes files from a large amount of sources. Specifically:

- You can upload a video, GIF, audio or image file from your device

- You can paste a link from various platforms (youtube, twitter, tiktok etc)

- You can record yourself or your screen

- You can use one of our plugins to import videos, gifs and images

All of these sources bring a variety of media into our system with various levels of browser support. For example:

- iPhones will often create HEVC videos, which are not supported on Chrome or Firefox

- Laptop screen reccorders often create WEBMs, which are not supported on Safari

- Twitter links are often HLS, which is not supported on Chrome or Firefox

These compatibility issues force us to convert certain input files before they can be previewed in browser, but there is actually a larger concern that affects more files.

Most video files are optimized for high-quality playback. A user viewing a video on youtube or instagram is not concerned with precise seek-ability, syncing with an audio track, or smoothly transitioning from one clip to the next. On the contrary, a user editing a video is extremely concerned with these things. As a result the files that come from your phone's camera often have excessively high bitrates. Kapwing transcodes these files to a lower resolution/bitrate in order to optimize for in-browser preview. Note that this is just for previewing, the files used to process a user's final video will always be the original high-quality inputs.

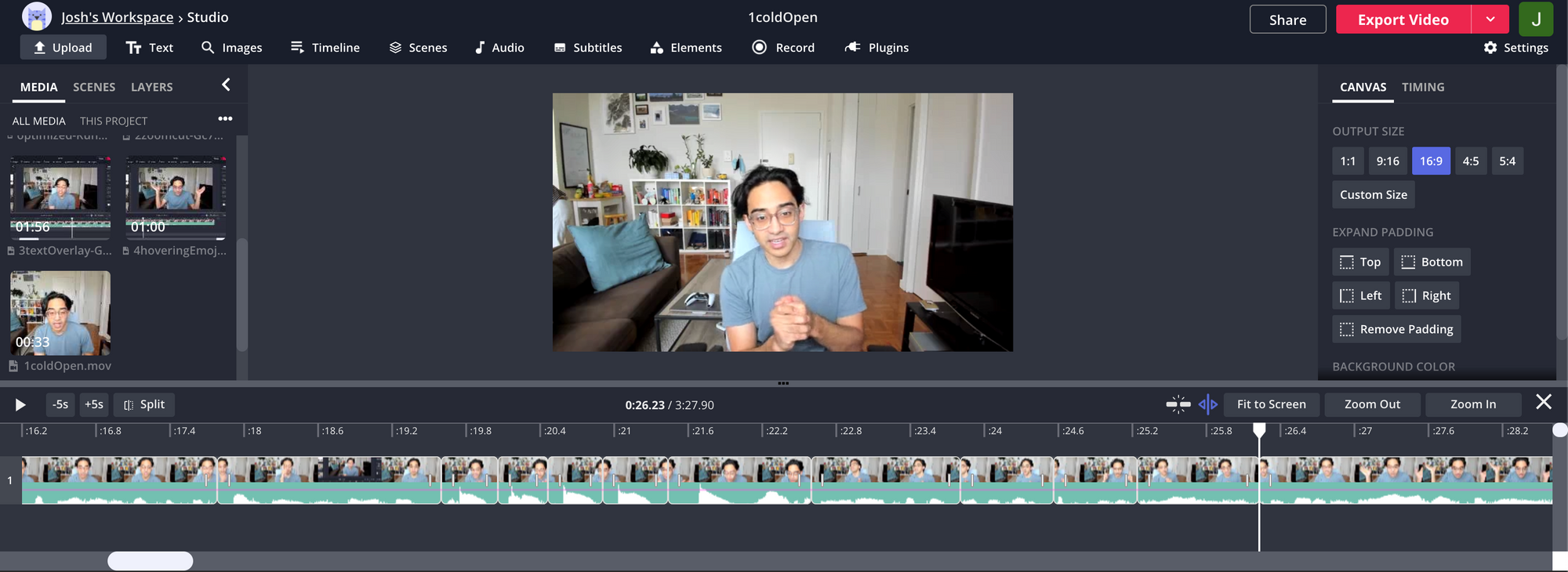

On a typical day, ingestion makes up a relatively small fraction of our compute costs. The remainder is the actual video processing. Kapwing is a very powerful editor. You can trim videos, concatenate videos, and add images, audio, subtitles, GIFs, text, and animations. In the Kapwing Studio, all these different items are layers, and the job of the processing pipeline is to combine these layers into a video.

The combination itself is done with ffmpeg, an open source utility often called the swiss army knife of video processing. At a high level the processing pipeline can be broken up into the following stages:

- download and preprocess layers: In this stage the media is downloaded to the server and we perform per layer processing, specifically we perform:

- Trims

- Speed changes

- File normalizations: For certain videos/performance optimizations we must ensure that all media have the same codec/framerate/audio sample rate.

Note that this is all done in parallel. We use thread pools to prevent overwhelming the system, more on this in later sections

- Splice: This is where we combine layers, including non-video layers. There is some preprocessing required of the non-video layers, but this is generally pretty quick. For most jobs the majority of the work is done in an FFMPEG filter complex

- Scene combination/Post processing: Kapwing supports multi-scene videos. Stage two is done on a per scene basis, and these scenes are combined in stage three. We attempt to do this with ffmpegs concat demuxer, which does not require re-encoding, but in certain cases we cant and must fall back to a filter complex. stage 3 also contains things like thumbnail generation, GIF conversion (for GIF outputs) and metric collection/db update

We have many optimizations that we do, but two in particular stick out as being crucial to our most valuable flows:

- Split optimization: Many users upload a small amount of videos and use kapwing to do 'splice editing'. They trim out different sections and rearrange others. An example of such a video is below. Each section is a layer. These types of videos typically have many layers but very few inputs, and each input is typically the same size. These projects make use of ffmpeg's concat demuxer to append clips together without re-encoding. The videos may have added music,. text or images which require us to eventually re-encode with a filter complex, but by pre-combining layers we can significantly reduce the total processing time

2. Subtitle optimization: Kapwing can support many layers in a video, but it is typically only a subtitled video that will have > 500 layers. In a typical workflow a user uploads a video, uses our free auto-transcribe functionality, reviews the results and then exports. While FFMPEG does have text support, it is quite difficult to achieve fidelity with the frontend using this feature. What we do instead is convert each subtitle to a PNG.

Next, we run the subtitles and underlying video through a filter complex; however, ffmpeg's filter complex cannot reliably work with > a few hundred layers. In order to make this work, we have to batch process the layers. This batching is in turn made more efficient by first batch processing the subtitles, 50 at a time, and then adding in the actual video content at the very end. With this flow Kapwing can reliably support over 1000 subtitles in a video.

Infrastructure

All the software optimizations in the world will not get us where we need to be if we are not making efficient use of our resources. Kapwing's video processing lives primarily on Google Cloud, and we use auto scaling work groups to ensure that we have the compute resources we need when and only when we need them. When a job comes into our application server we read its attributes and choose between one of several clusters:

- Standard cluster: this is the default

- Premium cluster: Premium users are routed to higher core, higher memory machines with lower utilization targets.

- GPU cluster: this is for jobs that require GPU processing, most notably our remove background functionality.

- Canary cluster: This is where releases are staged. 10-15% of jobs are sent here. During release we initially deploy to this cluster, monitor for a day or so, then do a full deploy. This process allows us to mitigate release risk while lowering overall QA cycles.

Each cluster lives behind a load balancer, and the machines on the clusters are containerized using docker. The containers run Gunicorn, which itself forks off several flask servers. Gunicorn allows us to run each flask server in a single process without getting bogged down by pythons global interpreter lock ( the infamous GIL ), which serializes python and limits parallelization. When we started Kapwing we forked off a process for each request, but this required us to also spawn a new db connection per request, which lead to connection spikes and downtime. In our existing architecture each flask server has a fixed size db connection pool, allowing us to handle large traffic spikes without overloading the system.

While our code is in python, the overwhelming majority of the computation is done by ffmpeg subprocesses, which are typically 16 threads each. Our clusters scale based on cpu utilization, but this can vary greatly within a job. Effective load balancing/autoscaling is particularly challenging as our jobs have highly varied resource utilization that cannot always be predicted at the moment of dispatch. Specifically:

- Transcode times for a given video depend on its length, resolution, bitrate, encoding etc.

- Certain videos can be trimmed and concatenated with no reencoding, however its not trivial to determine this pre-dispatch. Typically we must attempt this method and then validate results. If the results are not what we expect we spawn off a much more compute intense job

- Certain files require transcoding in order to work well with the other files in the job, but once again its difficult to determine this pre-dispatch.

One way we deal with this is with thread pools, which ensure that even if we do spawn off too many subprocesses at once, the system will queue them up and only run N at a time (N being determined by machine size). In the future we must develop a more sophisticated scaling metric in order to maximize utilization without risk of machine overload or long queue times.

Conclusion

Kapwing has grown from a scrappy meme maker to a full-featured cloud-based video editor in a little under four years. A key component of this growth has been our ability to process long and complex videos reliably and quickly, regardless of a users device. To achieve this we have invested heavily in cloud processing. This means optimizing the way we process videos as well as the underlying infrastructure where the processing occurs.

If you'd like to join us on this journey, feel free to apply here. We're hiring for Senior Full-Stack Engineers, especially people interested in video engineering, machine vision, and the future of the cloud.