How We Achieved 100% Adoption of AI Coding Agents

What it took to get every employee to commit code in Q1 2026 and the impact AI coding agents have on our startup.

In Q1 2026, every employee at Kapwing committed code to production. Our designer. Our sales lead. Our content writers. Our support reps. We’re a ~25-person company with about a dozen engineers; the other half of the company had never opened a PR before January.

This is the story of how we did it, what surprised us, and what it means for how we build software going forward.

Why We Bet on AI Coding Agents

As of this winter, I'd been using Claude Code heavily at work, and it seemed impossible to ignore the productivity gap between people who could use coding agents and those who couldn’t. Like other CEOs I've spoken with, I felt the existential pressure to extend these capabilities to everyone on our team.

So, we set a goal to get 100% of our team committing code in Q1. Here are the three reasons I wanted to roll out AI coding agents for everyone:

- Engineering velocity. At any given moment, we have a long backlog of low-complexity but high-value work like updating copy on a landing page or adding a new internal tool. This work interrupts engineers focused on complex problems. With Codex, the rest of our team can address these stories and serve customers while engineers focus on more complex projects, meaning we’re more efficient at every level.

- Building AI products requires using AI deeply. At Kapwing, we’re building for creators who work with AI. If our content lead and support team haven’t used AI agents themselves, they have a different relationship to the technology than our customers do. Closing that gap makes us more informed. I also think it’s made our non-technical teammates feel more included rather than anxious about what AI means for their roles.

- Every team sees problems engineering doesn’t. Our sales lead was sitting on a list of enterprise accessibility issues that had been deprioritized for months. Our designer wanted to revise the copy on our About page. Non-technical employees often don’t report fixable problems because they’ve internalized that problems go into a queue, not into production. I wanted to reverse that assumption.

The Rollout: Three Phases Over Five Months

Phase 1: Infrastructure and Early Adopters (October–November)

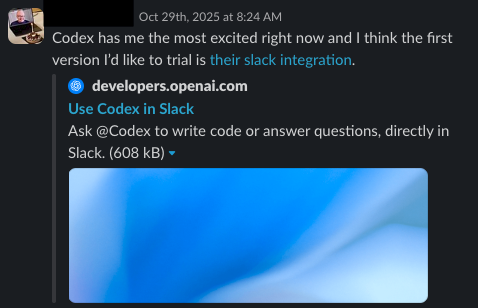

We started in late October by putting one engineering lead in charge of the project. His job was to research the tooling landscape, make a recommendation, and own the rollout. He landed on Codex (OpenAI’s coding agent) and built a roadmap.

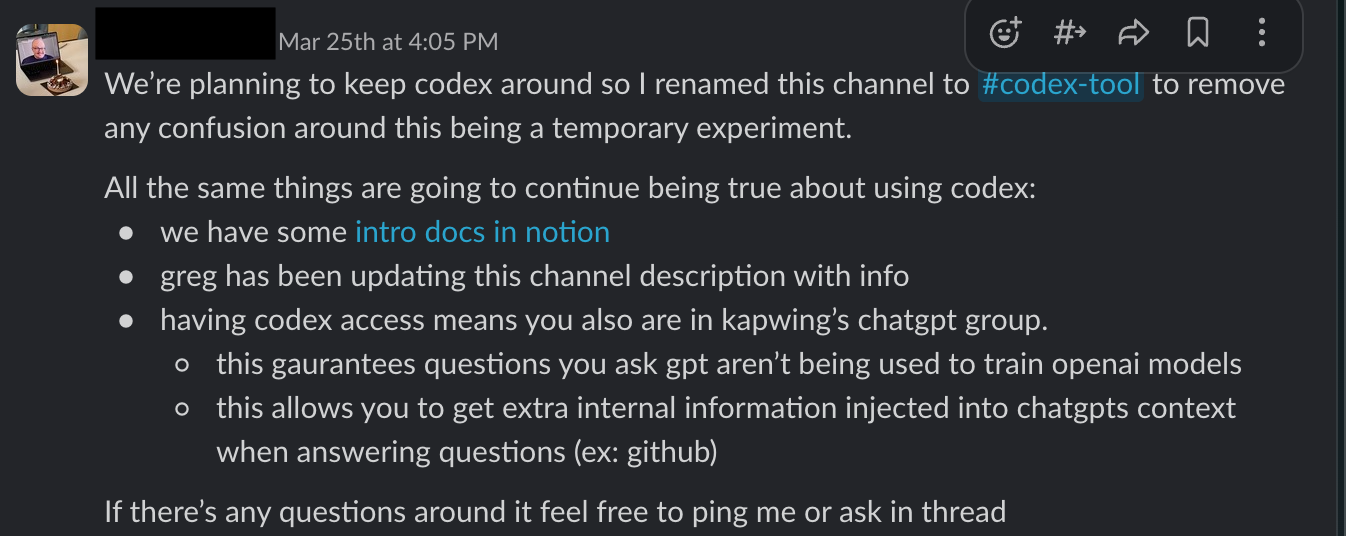

In early November, we set up a ChatGPT Enterprise organization and added everyone to it, which gave us a secure, managed instance for both ChatGPT and Codex access. We wired up the Codex/Slack integration and gave our QA leads and PMs access.

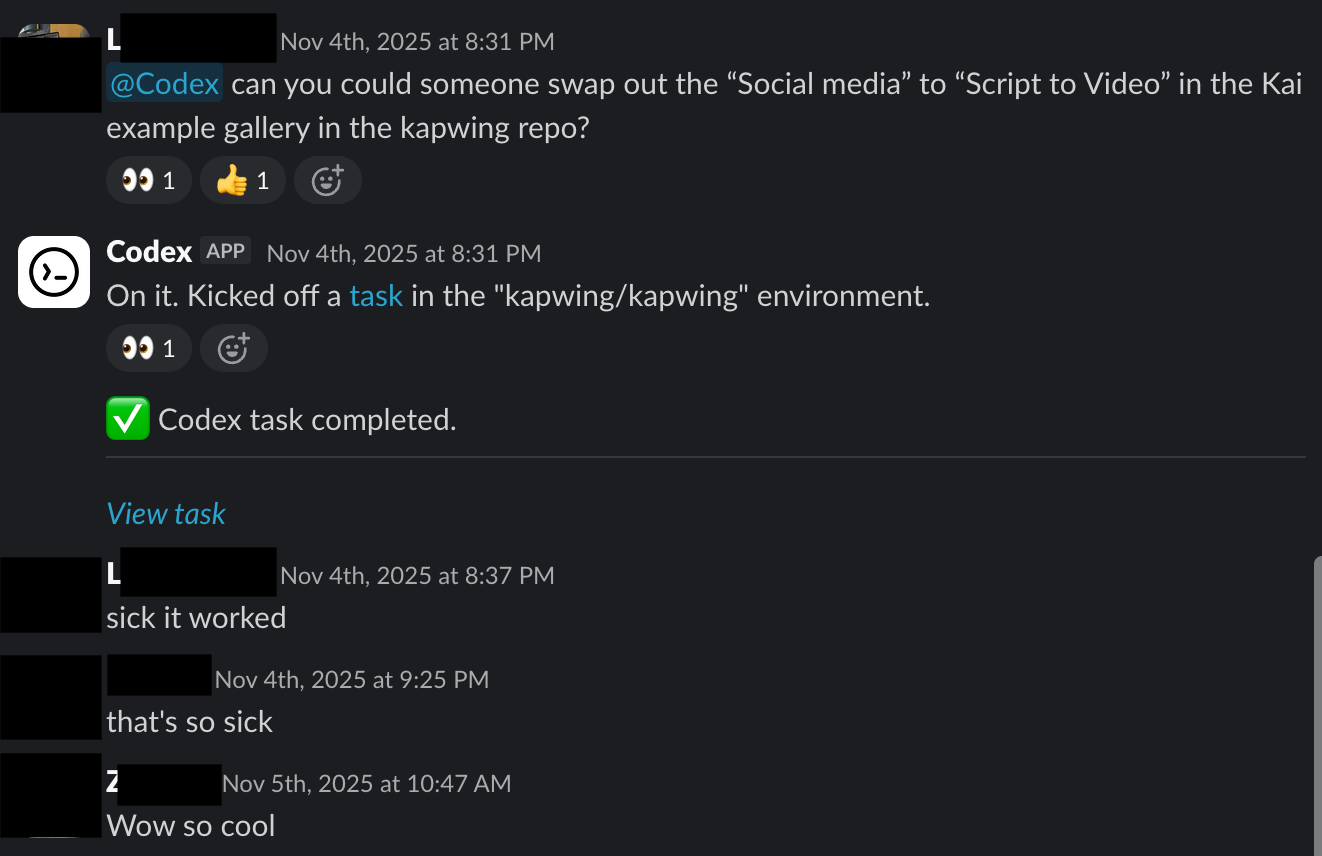

With the Codex+Slack connection, users can start a Codex task by mentioning it in a Slack message that contains enough context to tell it what to do. Codex will kick off a task, linked in the Slack message, and post a message when it has completed.

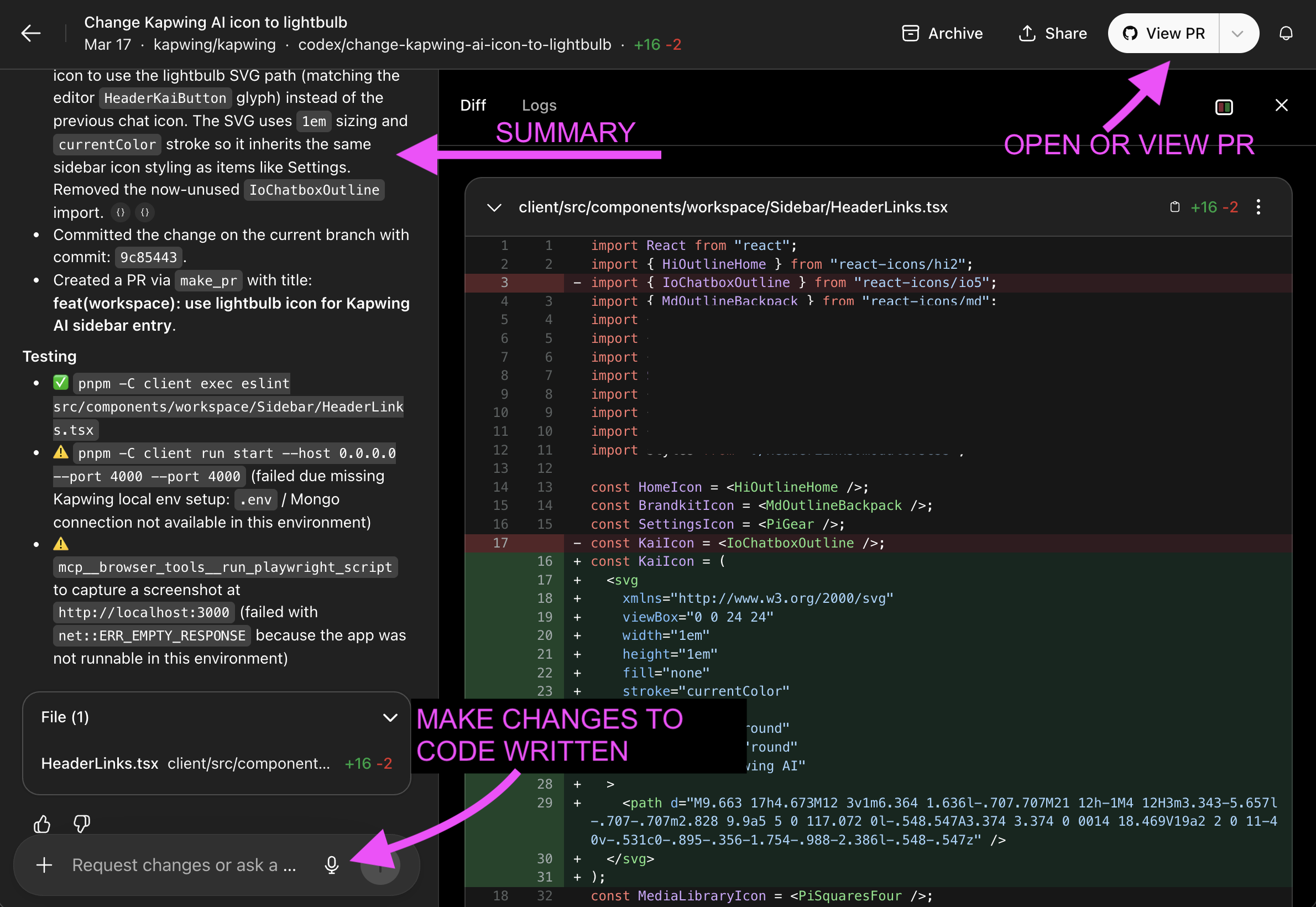

Within the Codex task, you can read a summary of the code changes on the left side and enter prompts if you notice problems when testing. You can also open a GitHub PR directly or view the existing PR to deploy and test it.

For adoption, the most important early move was to get company leaders building in public. Our engineering lead created a Slack channel for people interested in Codex and wrote documentation in Notion explaining how to use it. Within days, our Head of Product had joined, sent her first requests, and started posting results. Those messages did more to convey Codex’s value than any internal announcement could have. By November 12th, she had merged the first Codex PR into production.

Phase 2: Setting the Goal and Expanding Access (February)

After the holidays, we had a working system but limited adoption. In early February, we set an explicit goal: everyone commits code by the end of Q1.

Before our mid-February Codex training, we added everyone to the GitHub repo with appropriate permissions and spun up dev machines for the new “developers”: non-engineers who needed an environment to test their changes before deploying. We found that the largest friction point for non-technical contributors was setting up a local environment to see if their changes worked. So, we enabled deployments to a dev machine via a GitHub comment, a one-line trigger that simplified deployment significantly.
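To make the idea of a one-line comment trigger concrete, here is a minimal sketch of how such a comment could be recognized. The `/deploy <machine>` syntax, the pattern, and the function name are all hypothetical; this post does not describe the actual trigger format, and the sketch does not call GitHub or perform a deployment.

```python
import re
from typing import Optional

# Hypothetical trigger syntax: a PR comment whose first line is "/deploy <machine>".
# The real comment format is not described in this post.
DEPLOY_PATTERN = re.compile(r"^/deploy\s+(?P<target>[A-Za-z0-9_-]+)\s*$")


def parse_deploy_comment(comment_body: str) -> Optional[str]:
    """Return the requested dev machine name, or None if the comment is not a trigger."""
    lines = comment_body.strip().splitlines()
    if not lines:
        return None
    # Only the first line is inspected, so a trailing note does not block the trigger.
    match = DEPLOY_PATTERN.match(lines[0].strip())
    return match.group("target") if match else None
```

Under these assumptions, a comment such as `/deploy dev-3` would yield `dev-3`, while an ordinary review comment would yield `None` and be ignored.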

Phase 3: Training, Automation, and the Last Mile (February–March)

In mid-February, I led a training session for non-technical teammates. Beforehand, we assigned each person a well-scoped story. This enabled them to try out the process without product ambiguities and have a quick win.

In early March, we connected Codex to Shortcut, our bug tracker, so agents automatically address stories we’ve tagged for them. By March 23rd, the last two teammates had deployed their changes to production.
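The selection step behind "stories we’ve tagged for them" can be sketched roughly as follows. This is an illustration only: the `Story` type, the `codex` label name, and the `stories_for_agent` function are hypothetical, and the sketch does not use the Shortcut or Codex APIs.

```python
from dataclasses import dataclass, field
from typing import List

AGENT_LABEL = "codex"  # hypothetical label name, not taken from the post


@dataclass
class Story:
    """A simplified, hypothetical stand-in for a tracker story."""
    story_id: int
    title: str
    labels: List[str] = field(default_factory=list)
    completed: bool = False


def stories_for_agent(stories: List[Story], label: str = AGENT_LABEL) -> List[Story]:
    """Select open stories that carry the agent label, keeping their original order."""
    return [s for s in stories if label in s.labels and not s.completed]
```

The point of the sketch is that humans stay in control of the queue: an agent only picks up work that someone has explicitly labeled for it.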

What a Good Training Session Looks Like

I ran the initial training session for non-technical teammates myself, partly because I wanted to signal that this was a priority and partly because I’d spent enough time using these tools that I could speak to the failure modes. Here are the topics we covered in our Codex training session:

- What coding agents are (and aren’t). We spent time on mental models — Codex is good at making precise, well-scoped changes, and less reliable when the task is ambiguous or requires deep architectural judgment. Understanding that shaped how people framed their first requests.

- The full PR workflow. How to write a prompt, iterate on the output, deploy to a dev machine, test visually, and get an engineer to review before merging. We walked through each step with a real example.

- Prompting specifics. Codex performs best when you point to exact on-page elements: not “make the button bigger” but “increase the padding on the button with class `cta-primary` in `header.jsx`.” We covered how to use browser dev tools to inspect elements, identify exactly what needs to change, and use the ChatGPT <> GitHub integration to ask questions about the code.

- A pre-vetted story for each person. Rather than asking people to find their own first task, we assigned each non-technical teammate a story from our backlog that we’d already reviewed for complexity and scope.

- Troubleshooting. The session ended with live troubleshooting as people ran into access and connection issues. Getting those unblocked in real time, in front of the group, normalized the friction and showed that the engineering team was ready to help.
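The prompting advice above (name the file and the exact element, then state the change) can be turned into a small template. This is a sketch under stated assumptions: `build_prompt` and its parameters are hypothetical helpers for illustration, not part of Codex or of the training materials.

```python
def build_prompt(file_path: str, css_class: str, change: str, check: str = "") -> str:
    """Compose a well-scoped request that names the file, the element, and the change.

    All parameter names are hypothetical; the template only illustrates the
    "point to exact on-page elements" advice.
    """
    if not file_path.strip() or not css_class.strip():
        # Refuse vague requests: a target file and element are required.
        raise ValueError("Name the file and the element class before prompting.")
    prompt = (
        f"In `{file_path}`, find the element with class `{css_class}`. "
        f"{change.strip()}"
    )
    if not prompt.endswith("."):
        prompt += "."
    if check.strip():
        # Optional: tell the agent (and the reviewer) how to confirm the change.
        prompt += f" Verify: {check.strip()}"
    return prompt


print(build_prompt("header.jsx", "cta-primary", "Increase the padding on this button"))
```

The class name and file come from inspecting the page with browser dev tools, which is why that step comes before writing the prompt.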

What Actually Happened

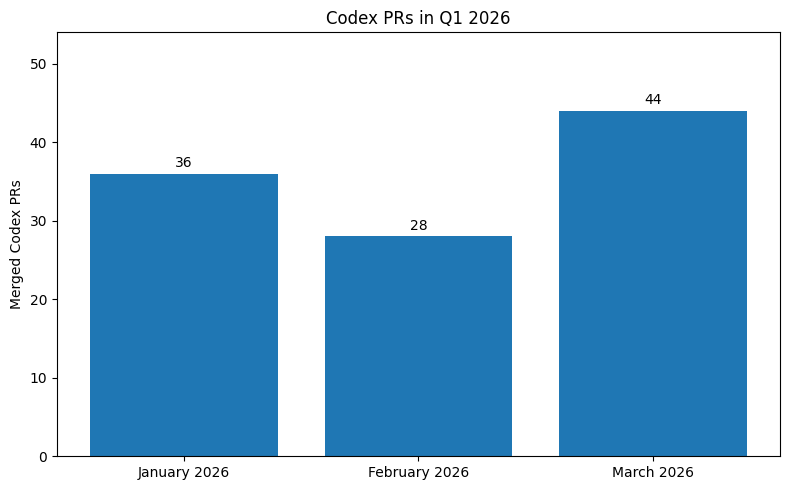

In Q1 2026, we shipped nearly 108 PRs tagged with Codex. Here's the surprising impact it had on the company:

- We eliminated bug bash. We used to run a three-day “bug bash” event once a quarter, a sprint where engineers stopped new feature work to address low-priority bugs. Our QA Manager told us in March that we don’t need bug bash anymore because the Shortcut-Codex integration handles those issues continuously. So, AI has freed up about 36 engineer-days per quarter (three days across roughly a dozen engineers), which is roughly half of one engineer’s salary for the quarter.

- Our QA team became one of our most productive engineering contributors. QA was already semi-technical and specialized in reproducing and precisely describing bugs. It turned out that skill transferred directly to prompting coding agents. Our QA Manager ended the quarter in the top five for most PRs committed.

- Our content team started doing interactive journalism. Our writers have started using custom HTML elements in blog posts — interactive visualizations, embedded data — that would have required an engineering request before. It’s changed what “content marketing” means for us.

- Critical incidents went down. The number of production incidents in Q1 was lower than in previous quarters. I take this as evidence that coding agents aren’t introducing meaningful rollout risk at our current scale and with our existing review infrastructure.
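The bug bash figure in the list above can be checked with back-of-the-envelope arithmetic. The engineer count of 12 stands in for "about a dozen," and the 65 working days per quarter (13 weeks of 5 days, ignoring holidays and leave) is an assumption made for this sketch.

```python
BUG_BASH_DAYS = 3                  # from the post: a three-day event each quarter
ENGINEERS = 12                     # assumption: "about a dozen engineers"
WORKING_DAYS_PER_QUARTER = 13 * 5  # assumption: 13 weeks x 5 days, no holidays


def bug_bash_cost(days: int, engineers: int, working_days: int) -> tuple:
    """Return (engineer-days spent, fraction of one engineer's quarter)."""
    engineer_days = days * engineers
    return engineer_days, engineer_days / working_days


engineer_days, fraction = bug_bash_cost(BUG_BASH_DAYS, ENGINEERS, WORKING_DAYS_PER_QUARTER)
print(f"{engineer_days} engineer-days, about {fraction:.0%} of one engineer's quarter")
# prints "36 engineer-days, about 55% of one engineer's quarter"
```

Under these assumptions, the estimate lands a little above half of one engineer's quarter, which is consistent with the "roughly half" figure.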

The software engineering role is changing. Our engineers are reviewing more PRs and spending more time on architectural decisions than before. The job is increasingly about deciding what to build, defining how to break problems into agent-sized tasks, and maintaining quality through review — less about writing every line of code themselves. I’m planning to update our engineering career ladder to reflect this.

What We’re Still Figuring Out

Coding agents do not replace product-market fit. We still have technical challenges to solve in our prompt-to-edit surface before it becomes a highly retentive and satisfying product. Operational leverage only compounds when you’re pointed at the right things. In Q2, we have clear goals and a new bet in the works.

We’re also still running Codex and Claude Code in parallel across the team, which means inconsistent tooling and hard-to-attribute token costs. In Q2, we plan to either standardize on one or deliberately support both.

We also plan to integrate our design system more deeply into AI-generated code, run a training session on MCPs and agent automations, and experiment with multi-agent orchestration as the tools mature.

Brave New World

We’re five months into something that feels genuinely new. The boundary between “technical” and “non-technical” at Kapwing is blurring in ways that I think are good for speed, for morale, and for the quality of the work. I’m glad we started when we did.

If you’re a company leader thinking about this: the infrastructure and process work took longer than we expected. The cultural shift happened faster.

Thanks for reading! Share this article or reach out over X (@KapwingApp) to share your own experiences with coding agents as you roll them out to your own team.